Constructing Agent Metrics

Each request can contain a maximum of 50 invocation metrics, and the total request size must not exceed 5 MB.

You can instrument your AI agent application to collect invocation metrics and export them to a centralized metrics ingestion endpoint. Once integrated, you can monitor agent performance, including latency, token usage, tool calls, errors, and guardrail activity, for analytics purposes.

The integration is framework-agnostic. Whether you use CrewAI, LangChain, LlamaIndex, OpenAI Agents SDK, AutoGen, or any other orchestration layer, the approach is the same: wrap the agent invocation with lightweight instrumentation, collect the metrics, and send them to the endpoint, either as a single JSON payload after each run or as a batch of metrics in one array.
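When batching, both request limits stated at the top of this page apply to every POST. The following is a minimal sketch of splitting a list of ResourceMetric objects into compliant envelopes. It assumes "5 MB" means 5,000,000 bytes of UTF-8 encoded JSON (a conservative reading), and batch_payloads is a hypothetical helper name, not part of any SDK.

```python
import json
from typing import Any, Dict, Iterator, List

MAX_METRICS_PER_REQUEST = 50   # documented per-request limit
MAX_REQUEST_BYTES = 5_000_000  # assumption: 5 MB read as 5,000,000 bytes

def batch_payloads(metrics: List[Dict[str, Any]]) -> Iterator[Dict[str, Any]]:
    """Yield resourceMetrics envelopes that respect both request limits."""
    batch: List[Dict[str, Any]] = []
    for metric in metrics:
        candidate = batch + [metric]
        size = len(json.dumps({"resourceMetrics": candidate}).encode("utf-8"))
        too_many = len(candidate) > MAX_METRICS_PER_REQUEST
        if batch and (too_many or size > MAX_REQUEST_BYTES):
            # Flush the current batch and start a new one with this metric.
            yield {"resourceMetrics": batch}
            batch = [metric]
        else:
            batch = candidate
    if batch:
        yield {"resourceMetrics": batch}
```

With 120 queued invocations, this yields three requests of 50, 50, and 20 entries. A single metric larger than the byte limit is still emitted on its own, which is not expected for payloads of this shape.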

Supported Frameworks: This applies to all supported agent providers, including CrewAI, LangChain, LangGraph, LlamaIndex, OpenAI Agents SDK, Microsoft AutoGen, Semantic Kernel, PydanticAI, SmolAgents, Strands Agents, Mastra, and others. See Framework-Specific Examples for framework-specific extraction guidance.

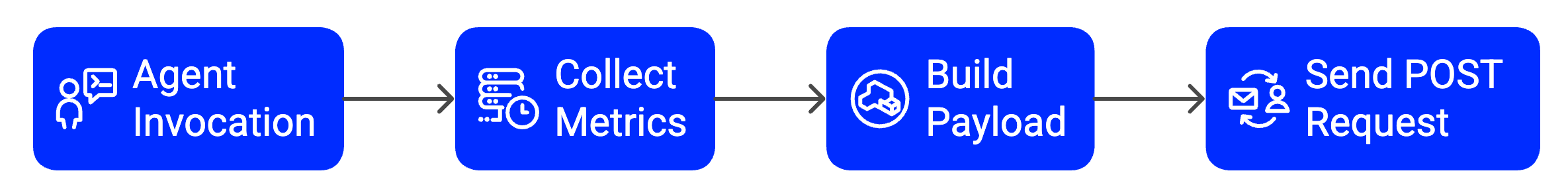

Integration Flow

The integration follows a four-stage pipeline that runs alongside your existing agent logic:

- Agent Invocation — Your agent runs as usual, with no changes to business logic.

- Collect Metrics — During execution, capture start and end timestamps, token usage from the LLM, tool call results, and any errors.

- Build Payload — After execution completes, structure the collected data into the resourceMetrics JSON format. Refer to Metrics Payload Schema.

- POST to Endpoint — Send the payload to the ingestion endpoint using a single HTTPS POST request.

Design Principle: Keep the instrumentation non-blocking so it never pauses or delays your agent's execution. Run your agent as normal and send metrics after each invocation completes. The POST request must stay off the critical execution path and must not affect the agent's execution flow.
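One way to keep the POST off the critical path in a Python agent is to hand it to a background worker thread. This is a minimal sketch using the standard library's concurrent.futures. The names send_in_background and agent_metrics are hypothetical, and send stands for whatever function you use to POST one payload.

```python
import logging
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Any, Callable, Dict

logger = logging.getLogger("agent_metrics")

# A single worker thread delivers metrics so the agent never waits on the POST.
_executor = ThreadPoolExecutor(max_workers=1, thread_name_prefix="agent-metrics")

def _log_failure(future: Future) -> None:
    # Delivery errors are logged here and never re-raised into agent code.
    exc = future.exception()
    if exc is not None:
        logger.warning("metrics delivery failed: %r", exc)

def send_in_background(send: Callable[[Dict[str, Any]], Any],
                       payload: Dict[str, Any]) -> Future:
    """Schedule send(payload) on the worker thread and return immediately."""
    future = _executor.submit(send, payload)
    future.add_done_callback(_log_failure)
    return future
```

The agent thread only pays the cost of submit(). The returned Future is useful in tests or at shutdown, but normal agent code can ignore it.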

Metrics Payload Schema

Every POST request to the ingestion endpoint must include a JSON body containing a top-level resourceMetrics array. Each element in this array represents a single agent invocation.

Envelope

```
{
  "resourceMetrics": [ <ResourceMetric>, ... ]
}
```

ResourceMetric Object

| Field | Type | Required | Description |
|---|---|---|---|
| extAccountAliasId | string | Required | Your account UUID provided during onboarding. |
| providerType | string | Required | Agent framework constant (e.g. CREWAI, LANGCHAIN). See Provider Type Constants under Framework-Specific Examples. |
| operation | string | Required | Operation type. Use InvokeAgent for standard agent runs. |
| sessionId | string | Required | A unique UUID (v4), generated fresh for every invocation. |
| schemaVersion | string | Required | Payload version. Always send "1.0.0". |
| time | number | Required | Unix timestamp in milliseconds at invocation start. Example: 1775730591000 |
| extModelId | string | Optional | LLM model identifier (e.g. gpt-4o, claude-3-5-sonnet, llama3.2). |
| promptType | string | Optional | Prompt classification. Common values: CHAT, COMPLETION, Manual. |
| totalTime | number | Optional | Total wall-clock time of the invocation in milliseconds. |
| ttft | number | Optional | Time to first token in milliseconds. Send 0 if streaming is not used or the value cannot be measured. |
| modelLatency | number | Optional | Time spent inside LLM calls in milliseconds, excluding tool execution time. |
| modelInvocationCount | integer | Optional | Total LLM calls made during this run. If your agent loops, increment once per call. Default: 1 |
| inputTokenCount | integer | Optional | Total prompt/input tokens across all LLM calls in this run. Default: 0 |
| outputTokenCount | integer | Optional | Total completion/output tokens across all LLM calls in this run. Default: 0 |
| invocationServerErrors | integer | Optional | Count of HTTP 5xx errors during the agent invocation. Default: 0 |
| invocationClientErrors | integer | Optional | Count of HTTP 4xx errors during the agent invocation. Default: 0 |
| modelInvocationThrottles | integer | Optional | Count of HTTP 429 rate-limit responses from the LLM provider. Default: 0 |
| modelInvocationClientErrors | integer | Optional | LLM API-level 4xx errors. Default: 0 |
| modelInvocationServerErrors | integer | Optional | LLM API-level 5xx errors and connection failures. Default: 0 |
| modelInvocationUnknownErrors | integer | Optional | Errors that fit no other category. Default: 0 |
| guardrailHits | integer | Optional | Number of times a guardrail, content filter, or safety check triggered. Default: 0 |
| costUsage | number | Optional | Total cost of the agent invocation in USD, rounded to 2 decimal places. Omit or set to null if not applicable. |
| tools | array | Optional | Array of ToolMetric objects. Omit if your agent used no tools. See ToolMetric Object. |

All fields use camelCase. Always include fields marked as Required.
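A minimal sketch of assembling one ResourceMetric in Python, assuming you want every Required field set and the optional counters pre-filled with the defaults from the table above. build_resource_metric is a hypothetical helper. Optional fields are passed as camelCase keyword arguments, and None values are dropped so that unmeasured fields are omitted.

```python
import time
import uuid
from typing import Any, Dict, Optional

SCHEMA_VERSION = "1.0.0"

# Optional counters with the defaults listed in the ResourceMetric table.
_COUNTER_DEFAULTS = {
    "modelInvocationCount": 1,
    "inputTokenCount": 0,
    "outputTokenCount": 0,
    "invocationServerErrors": 0,
    "invocationClientErrors": 0,
    "modelInvocationThrottles": 0,
    "modelInvocationClientErrors": 0,
    "modelInvocationServerErrors": 0,
    "modelInvocationUnknownErrors": 0,
    "guardrailHits": 0,
}

def build_resource_metric(ext_account_alias_id: str, provider_type: str,
                          start_epoch_ms: Optional[int] = None,
                          session_id: Optional[str] = None,
                          **optional: Any) -> Dict[str, Any]:
    """Return one ResourceMetric with Required fields and counter defaults."""
    metric: Dict[str, Any] = {
        "extAccountAliasId": ext_account_alias_id,
        "providerType": provider_type,
        "operation": "InvokeAgent",
        "sessionId": session_id or str(uuid.uuid4()),  # fresh UUID v4 per run
        "schemaVersion": SCHEMA_VERSION,
        "time": start_epoch_ms if start_epoch_ms is not None else int(time.time() * 1000),
        **_COUNTER_DEFAULTS,
    }
    # Caller-supplied optional fields override defaults; None means "omit".
    metric.update({k: v for k, v in optional.items() if v is not None})
    if "costUsage" in metric:
        metric["costUsage"] = round(metric["costUsage"], 2)
    if not metric.get("tools"):
        metric.pop("tools", None)  # omit the array when no tools were used
    return metric
```

For example, build_resource_metric(alias_id, "LANGCHAIN", inputTokenCount=377, outputTokenCount=233) returns a dict that can go straight into the resourceMetrics array.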

ToolMetric Object

Include one ToolMetric entry per tool category used.

| Field | Type | Description |
|---|---|---|
| toolType | string | Category. Use "api" for REST/HTTP tools and "mcp" for MCP tools. |
| toolCalls | integer | Total number of calls attempted for this tool type. |
| successCount | integer | Number of calls that completed successfully. |
| failureCount | integer | Number of calls that resulted in an error or exception. |

Integration Guide

Follow these five steps to instrument any agent framework. The approach is the same across frameworks; only the API calls used to extract data differ.

1. Track Session and Timing

Before invoking your agent, capture the start time and generate a unique session ID. After the agent completes, record the end time.

```python
import time
import uuid

# Before the agent call
session_id = str(uuid.uuid4())         # unique per run
start_time = time.perf_counter()       # monotonic: use for durations
start_epoch = int(time.time() * 1000)  # Unix ms: use for the 'time' field

# ... run your agent ...

# After the agent call
end_time = time.perf_counter()
total_time = (end_time - start_time) * 1000  # milliseconds
```

Use time.perf_counter() (Python) or performance.now() (JavaScript) to measure durations, because system clock changes do not affect them. Use time.time() or Date.now() only for absolute timestamps.
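If you instrument several call sites, the same timing logic can be wrapped in a context manager. This is a sketch under that assumption. timed_invocation and InvocationTiming are hypothetical names. The finally block records totalTime even when the agent raises, so error payloads still carry a duration.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, Optional

@dataclass
class InvocationTiming:
    session_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    start_epoch_ms: int = 0                # value for the 'time' field
    total_time_ms: Optional[float] = None  # value for 'totalTime'

@contextmanager
def timed_invocation() -> Iterator[InvocationTiming]:
    """Record the epoch start (ms) and the monotonic duration of a block."""
    timing = InvocationTiming(start_epoch_ms=int(time.time() * 1000))
    start = time.perf_counter()
    try:
        yield timing
    finally:
        # Runs on success and on exceptions alike.
        timing.total_time_ms = (time.perf_counter() - start) * 1000
```

Usage: `with timed_invocation() as t: result = agent.run(user_input)`, then read t.session_id, t.start_epoch_ms, and t.total_time_ms when building the payload.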

2. Capture LLM Token Usage

Most agent frameworks expose token usage in the invocation response. Extract the token counts from the response object after execution completes.

| Framework | Input Tokens | Output Tokens |
|---|---|---|
| CrewAI | result.token_usage.prompt_tokens | result.token_usage.completion_tokens |
| LangChain / LangGraph | result.usage_metadata["input_tokens"] | result.usage_metadata["output_tokens"] |
| LlamaIndex | response.metadata["token_usage"].prompt_tokens | response.metadata["token_usage"].completion_tokens |
| OpenAI Agents SDK | result.raw_responses[-1].usage.input_tokens | result.raw_responses[-1].usage.output_tokens |
| Microsoft AutoGen | response.usage.prompt_tokens | response.usage.completion_tokens |
| Semantic Kernel | result.metadata["usage"].prompt_tokens | result.metadata["usage"].completion_tokens |
| PydanticAI | result.usage().request_tokens | result.usage().response_tokens |
| Mastra / AI SDK (JS) | result.usage.promptTokens | result.usage.completionTokens |
| Custom / Other | Input-token field parsed from the raw LLM API response | Output-token field parsed from the raw LLM API response |

Agentic Loops: If your agent executes multiple LLM calls in a single run (e.g., ReAct-style reasoning), aggregate token usage across all calls and report totals. Also increment modelInvocationCount for each LLM call made.
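A minimal sketch of that aggregation, assuming your framework lets you observe usage per LLM call (for example from a callback). UsageTotals is a hypothetical helper. A call whose usage is missing is still counted toward modelInvocationCount, with its tokens treated as 0.

```python
from dataclasses import dataclass
from typing import Dict, Optional

@dataclass
class UsageTotals:
    """Running token and call totals for one agent run (hypothetical helper)."""
    input_tokens: int = 0
    output_tokens: int = 0
    invocation_count: int = 0

    def record_call(self, input_tokens: Optional[int],
                    output_tokens: Optional[int]) -> None:
        """Add one LLM call; missing usage values count as 0 tokens."""
        self.input_tokens += input_tokens or 0
        self.output_tokens += output_tokens or 0
        self.invocation_count += 1

    def as_fields(self) -> Dict[str, int]:
        """Return the totals under their ResourceMetric field names."""
        return {
            "inputTokenCount": self.input_tokens,
            "outputTokenCount": self.output_tokens,
            "modelInvocationCount": self.invocation_count,
        }
```

For a three-step ReAct run with per-call usage of (120, 30), (200, 45), and (57, unknown), as_fields() reports 377 input tokens, 75 output tokens, and 3 invocations.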

3. Count Tool Calls

Iterate through all tool calls executed by your agent and categorize them by type. Most frameworks expose tool calls through the final result object or event/callback hooks.

```python
# Pseudo-code: adapt field names to your framework
api_calls = api_success = api_failure = 0
mcp_calls = mcp_success = mcp_failure = 0

for tool_call in result.tool_calls:  # framework-specific iteration
    if tool_call.type == "api":
        api_calls += 1
        if tool_call.error:
            api_failure += 1
        else:
            api_success += 1
    elif tool_call.type == "mcp":
        mcp_calls += 1
        if tool_call.error:
            mcp_failure += 1
        else:
            mcp_success += 1
```

If your framework does not expose per-call success or failure details, count all calls as successful and set failureCount to 0.
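The counters can then be turned into the tools array. This is a sketch that assumes toolCalls equals successCount plus failureCount (as in the complete payload example later on this page) and that unused categories should be left out. build_tools_array is a hypothetical name.

```python
from typing import Any, Dict, List, Tuple

def build_tools_array(counts: Dict[str, Tuple[int, int]]) -> List[Dict[str, Any]]:
    """Turn {toolType: (successCount, failureCount)} into ToolMetric entries."""
    tools: List[Dict[str, Any]] = []
    for tool_type, (success, failure) in counts.items():
        if success + failure == 0:
            continue  # skip categories the agent never used
        tools.append({
            "toolType": tool_type,
            "toolCalls": success + failure,
            "successCount": success,
            "failureCount": failure,
        })
    return tools
```

Usage: `build_tools_array({"api": (api_success, api_failure), "mcp": (mcp_success, mcp_failure)})`. If the result is an empty list, omit the tools field from the payload.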

4. Classify Errors

Wrap the agent invocation in a try/except (or equivalent) block and map any exceptions to the appropriate error counters.

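```python
# Initialize every counter so the payload is complete on success, too
model_invocation_throttles = 0
invocation_server_errors = invocation_client_errors = 0
model_invocation_server_errors = model_invocation_unknown_errors = 0
result = None

# RateLimitError, ServerError and ClientError are placeholders: substitute
# the exception classes your LLM client or framework actually raises.
try:
    result = agent.run(user_input)
except RateLimitError:   # HTTP 429 (catch before the generic 4xx handler)
    model_invocation_throttles = 1
except ServerError:      # HTTP 5xx
    invocation_server_errors = 1
except ClientError:      # other HTTP 4xx
    invocation_client_errors = 1
except ConnectionError:  # network failure (Python built-in)
    model_invocation_server_errors = 1
except Exception:        # anything else
    model_invocation_unknown_errors = 1
```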

Always send metrics, even when an error occurs, because error metrics are still valuable. If you catch an exception, set the matching error counter to 1, set any other numeric fields you could not measure to 0, and then build and send the payload.
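If your client's exceptions carry an HTTP status, the classification can be driven by the status code, and a try/finally guarantees that metrics are sent on every path. This is a minimal sketch that assumes a status_code attribute on HTTP errors (the OpenAI Python SDK's APIStatusError has one, but adapt the lookup to your client). classify_error, run_with_metrics, and the send callback are hypothetical. The counter mapping mirrors the example above.

```python
from typing import Any, Callable, Dict, Optional, TypeVar

T = TypeVar("T")

ERROR_COUNTERS = (
    "invocationServerErrors", "invocationClientErrors",
    "modelInvocationThrottles", "modelInvocationClientErrors",
    "modelInvocationServerErrors", "modelInvocationUnknownErrors",
)

def classify_error(exc: BaseException) -> str:
    """Map an exception to the payload counter it should increment."""
    status: Optional[int] = getattr(exc, "status_code", None)  # assumption
    if status == 429:
        return "modelInvocationThrottles"
    if status is not None and 500 <= status <= 599:
        return "invocationServerErrors"
    if status is not None and 400 <= status <= 499:
        return "invocationClientErrors"
    if isinstance(exc, ConnectionError):
        return "modelInvocationServerErrors"
    return "modelInvocationUnknownErrors"

def run_with_metrics(run_agent: Callable[[], T],
                     send: Callable[[Dict[str, int]], Any]) -> T:
    """Run the agent and always hand the error counters to send."""
    counters = {name: 0 for name in ERROR_COUNTERS}
    try:
        return run_agent()
    except Exception as exc:
        counters[classify_error(exc)] = 1
        raise  # the agent's own error still reaches the caller
    finally:
        send(counters)  # runs on success and on failure
```

In practice, send would merge the counters into the full payload and hand it to a non-blocking sender. Keep send from raising, because an exception inside finally would mask the agent's original error.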

5. Send Metrics to the Endpoint

After you collect and compute all metrics, assemble them into the final payload and send a POST request to the ingestion endpoint.

Authentication & Configuration

Required HTTP Headers

| Header | Value | Notes |
|---|---|---|
| Content-Type | application/json | Always required |
| Authorization | Basic BOOMI_TOKEN.user@boomi.com:api-token | Replace user@boomi.com with your user name and api-token with the Platform API token you created for authentication |
| Origin | Your application origin | Optional. Required only if the endpoint enforces a CORS policy |

Recommended Environment Variables

| Variable | Description | Example |
|---|---|---|
| AI_METRICS_ENDPOINT | Full URL of the ingestion endpoint | https://api.example.com/v1/metrics |
| AI_METRICS_ORIGIN | Origin header value (optional) | https://app.your-agent.com |
| EXT_ACCOUNT_ALIAS_ID | Your account UUID from onboarding | 3362d163-b990-49a6-... |
| PROVIDER_TYPE | Your agent framework identifier | LANGCHAIN |

Retry on Transient Failures: Implement exponential backoff with at least 3 retry attempts. Retry the request only for the following HTTP status codes: 429, 500, 502, 503, 504.
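The following is a minimal, standard-library-only sketch of the POST with that retry policy. post_metrics, the 0.5-second base delay, and the 10-second timeout are assumptions. max_retries=3 matches the documented minimum. The Authorization value is passed through exactly as configured in the headers table. The opener and sleep parameters exist only so the retry logic can be exercised without a network.

```python
import json
import time
import urllib.error
import urllib.request
from typing import Any, Callable, Dict

# Status codes this guide lists as retryable.
RETRYABLE_STATUSES = {429, 500, 502, 503, 504}

def post_metrics(payload: Dict[str, Any], endpoint: str, authorization: str, *,
                 max_retries: int = 3, base_delay: float = 0.5,
                 opener: Callable[..., Any] = urllib.request.urlopen,
                 sleep: Callable[[float], None] = time.sleep) -> int:
    """POST a resourceMetrics payload and return the final HTTP status.

    Retries only on RETRYABLE_STATUSES, waiting base_delay * 2**attempt
    seconds between tries; any other error propagates immediately.
    """
    body = json.dumps(payload).encode("utf-8")
    headers = {"Content-Type": "application/json", "Authorization": authorization}
    attempt = 0
    while True:
        request = urllib.request.Request(endpoint, data=body, headers=headers,
                                         method="POST")
        try:
            with opener(request, timeout=10) as response:
                return response.status  # urlopen raises HTTPError for non-2xx
        except urllib.error.HTTPError as err:
            if err.code not in RETRYABLE_STATUSES or attempt >= max_retries:
                raise  # non-transient error, or retries exhausted
        sleep(base_delay * 2 ** attempt)  # exponential backoff: 0.5 s, 1 s, 2 s
        attempt += 1
```

For example, pass os.environ["AI_METRICS_ENDPOINT"] as endpoint. Call the function from a background thread so the retry delays never block the agent.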

Framework-Specific Examples

Provider Type Constants

Use the exact providerType constant that matches your framework:

| Constant | Framework Name |
|---|---|
| CUSTOM_PROVIDER | Custom / bring-your-own framework |
| ADOBE_EXPERIENCE_PLATFORM_AGENT_ORCHESTRATOR | Adobe Experience Platform Agent Orchestrator |
| AG2 | AG2 |
| AGENT_GARDEN | Agent Garden |
| AGNO | Agno |
| AISDK | AI SDK (Vercel) |
| AKKIO | Akkio |
| AUTO_GPT | Auto-GPT |
| BABYAGI | BabyAGI |
| BEDROCK | Amazon Bedrock |
| CAMEL_AI | CAMEL-AI |
| CHATFUEL | ChatFuel |
| CLOUDFLARE_AGENTS | Cloudflare Agents |
| CREWAI | CrewAI |
| DATAROBOT_NO_CODE_AI_APPS | DataRobot No-Code AI Apps |
| DIFY | Dify |
| GOOGLE_AGENT_DEVELOPMENT_KIT | Google Agent Development Kit |
| HUGGING_FACE_TRANSFORMERS_AGENTS | Hugging Face Transformers Agents |
| IBM_WATSONX_ASSISTANT | IBM Watsonx Assistant |
| KUBIYA_AI | Kubiya.ai |
| LANGCHAIN | LangChain |
| LANGFLOW | Langflow |
| LANGGRAPH | LangGraph |
| LLAMAINDEX | LlamaIndex |
| LYZR | Lyzr |
| MASTRA | Mastra |
| METAGPT | MetaGPT |
| MICROSOFT_AUTOGEN | Microsoft AutoGen |
| MICROSOFT_COPILOT_STUDIO | Microsoft Copilot Studio |
| MICROSOFT_SEMANTIC_KERNEL | Microsoft Semantic Kernel |
| N8N | n8n |
| OPENAI_AGENTS_SDK | OpenAI Agents SDK |
| OPENAI_CHATGPT_TEAM_ENTERPRISE | OpenAI / ChatGPT Team / Enterprise |
| PHIDATA | Phidata |
| PYDANTIC_AI | PydanticAI |
| RASA | Rasa |
| SALESFORCE_AGENTFORCE | Salesforce Agentforce |
| SERVICENOW_AI_AGENTS | ServiceNow AI Agents |
| SMOLAGENTS | SmolAgents |
| STRANDS_AGENTS | Strands Agents |
| SUPERAGI | SuperAGI |
| WORKDAY_AI_AGENTS | Workday AI Agents |

Token & Tool Extraction by Framework

- CrewAI

```python
result = crew.kickoff(inputs={"user_input": query})

# Token counts
input_tokens = result.token_usage.prompt_tokens
output_tokens = result.token_usage.completion_tokens

# Tool calls: iterate task messages
for task_output in result.tasks_output:
    for msg in (task_output.messages or []):
        for tc in (msg.get("tool_calls") or []):
            tool_name = tc.get("function", {}).get("name", "")
            # classify tool_name as api or mcp
```

- LangChain / LangGraph

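```python
from typing import Any

from langchain_core.callbacks import BaseCallbackHandler

class MetricsCallback(BaseCallbackHandler):
    def __init__(self) -> None:
        super().__init__()
        # Counters must exist before the first callback increments them
        self.input_tokens = self.output_tokens = 0
        self.invocation_count = 0
        self.tool_success = self.tool_failure = 0

    def on_llm_end(self, response, **kwargs: Any) -> None:
        # llm_output can be None for some providers; fall back to an empty dict
        usage = (response.llm_output or {}).get("token_usage", {})
        self.input_tokens += usage.get("prompt_tokens", 0)
        self.output_tokens += usage.get("completion_tokens", 0)
        self.invocation_count += 1

    def on_tool_end(self, output, **kwargs: Any) -> None:
        self.tool_success += 1

    def on_tool_error(self, error, **kwargs: Any) -> None:
        self.tool_failure += 1

# Usage: agent.invoke(inputs, config={"callbacks": [MetricsCallback()]})
```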

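- OpenAI Agents SDK

```python
from agents import Agent, Runner

result = await Runner.run(agent, input=query)

# Token usage: sum over every model response so agentic loops are counted
input_tokens = sum(r.usage.input_tokens for r in result.raw_responses)
output_tokens = sum(r.usage.output_tokens for r in result.raw_responses)
model_invocation_count = len(result.raw_responses)

# Tool calls
for item in result.new_items:
    if getattr(item, "type", None) == "tool_call_item":
        tool_name = getattr(item.raw_item, "name", "")
        # classify tool_name as api or mcp
```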

- LlamaIndex

```python
from llama_index.core import Settings
from llama_index.core.callbacks import CallbackManager, TokenCountingHandler

token_counter = TokenCountingHandler()
Settings.callback_manager = CallbackManager([token_counter])

token_counter.reset_counts()  # counts are cumulative; reset before each run
response = query_engine.query(query)

input_tokens = token_counter.prompt_llm_token_count
output_tokens = token_counter.completion_llm_token_count
```

- Microsoft AutoGen

```python
# After: chat_result = user_proxy.initiate_chat(...)
# Parse chat_result.chat_history for usage blocks in messages
input_tokens = output_tokens = 0
for msg in chat_result.chat_history:
    usage = msg.get("usage") or {}
    input_tokens += usage.get("prompt_tokens", 0)
    output_tokens += usage.get("completion_tokens", 0)
```

- Custom / OpenAI-compatible API

```python
raw = llm_client.chat.completions.create(...)
input_tokens = raw.usage.prompt_tokens
output_tokens = raw.usage.completion_tokens
```

Complete Payload Example

A fully populated resourceMetrics payload for a single invocation that used tools:

```json
{
  "resourceMetrics": [
    {
      "extAccountAliasId": "3362d163-b990-49a6-b53d-ffbbaa536ada",
      "providerType": "CREWAI",
      "operation": "InvokeAgent",
      "extModelId": "GPT",
      "promptType": "CHAT",
      "totalTime": 65526,
      "ttft": 0,
      "modelLatency": 3700,
      "modelInvocationCount": 1,
      "inputTokenCount": 377,
      "outputTokenCount": 233,
      "invocationServerErrors": 0,
      "invocationClientErrors": 0,
      "modelInvocationThrottles": 0,
      "modelInvocationClientErrors": 0,
      "modelInvocationServerErrors": 0,
      "modelInvocationUnknownErrors": 0,
      "guardrailHits": 3,
      "costUsage": 0.01,
      "sessionId": "03d3987e-362a-4fa1-848f-fe34e8a7d188",
      "tools": [
        { "toolType": "api", "toolCalls": 3, "successCount": 2, "failureCount": 1 },
        { "toolType": "mcp", "toolCalls": 2, "successCount": 2, "failureCount": 0 }
      ],
      "time": 1775730591000,
      "schemaVersion": "1.0.0"
    }
  ]
}
```

Validation & Troubleshooting

Pre-Ship Checklist

- POST request returns HTTP 200 or 202.
- All Required fields are present and non-null.
- time is a 13-digit Unix timestamp in milliseconds.
- Token counts are non-negative integers (0 is valid).
- Send metrics even when the agent throws an exception.
- The Authorization header is correctly configured.
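Several checklist items can be checked locally before sending. The following is a minimal sketch, assuming payloads are plain Python dicts. validate_payload is a hypothetical helper. It does not replace the server's own validation and cannot check the Authorization header or the HTTP response.

```python
from typing import Any, Dict, List

REQUIRED_FIELDS = ("extAccountAliasId", "providerType", "operation",
                   "sessionId", "schemaVersion", "time")
TOKEN_FIELDS = ("inputTokenCount", "outputTokenCount")

def validate_payload(payload: Dict[str, Any]) -> List[str]:
    """Return checklist violations; an empty list means the payload passed."""
    metrics = payload.get("resourceMetrics")
    if not isinstance(metrics, list) or not metrics:
        return ["resourceMetrics must be a non-empty array"]
    problems: List[str] = []
    if len(metrics) > 50:
        problems.append("more than 50 invocation metrics in one request")
    for i, metric in enumerate(metrics):
        for name in REQUIRED_FIELDS:
            if metric.get(name) is None:
                problems.append(f"[{i}] missing required field {name}")
        ts = metric.get("time")
        if ts is not None and not (isinstance(ts, int) and len(str(ts)) == 13):
            problems.append(f"[{i}] time must be a 13-digit Unix timestamp in ms")
        for name in TOKEN_FIELDS:
            value = metric.get(name, 0)
            # bool is a subclass of int, so reject it explicitly
            if not isinstance(value, int) or isinstance(value, bool) or value < 0:
                problems.append(f"[{i}] {name} must be a non-negative integer")
    return problems
```

A common failure this catches is a time value in seconds (10 digits) instead of milliseconds.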

Common Issues

| Symptom | Likely Cause | Fix |
|---|---|---|
| HTTP 401 Unauthorized | Missing, invalid, or expired API token | Check that the Authorization header is set and that the Platform API token is valid and not expired |
| HTTP 400 Bad Request | Missing required field or incorrect data type | Ensure all Required fields (see ResourceMetric Object) are included and correctly typed |
| Connection timeout | Incorrect endpoint URL or network issue | Verify AI_METRICS_ENDPOINT is correct and reachable; check firewall or network restrictions |

Quick Smoke Test

Use curl to validate your endpoint and credentials without running a real agent:

```bash
curl -X POST "<AI_METRICS_ENDPOINT>" \
  -H "Content-Type: application/json" \
  -H "Authorization: Basic BOOMI_TOKEN.user@boomi.com:api-token" \
  -d '{
    "resourceMetrics": [{
      "extAccountAliasId": "<refer-from-api-specification>",
      "providerType": "<refer-from-api-specification>",
      "operation": "InvokeAgent",
      "sessionId": "03d3987e-362a-4fa1-848f-fe34e8a7d188",
      "time": 1775730591000,
      "schemaVersion": "1.0.0",
      "invocationServerErrors": 0,
      "invocationClientErrors": 0,
      "modelInvocationCount": 1,
      "modelInvocationThrottles": 0,
      "modelInvocationClientErrors": 0,
      "modelInvocationServerErrors": 0,
      "modelInvocationUnknownErrors": 0,
      "guardrailHits": 0,
      "costUsage": 0.01
    }]
  }'
```

A 200 or 202 response confirms your credentials and endpoint URL are correct.